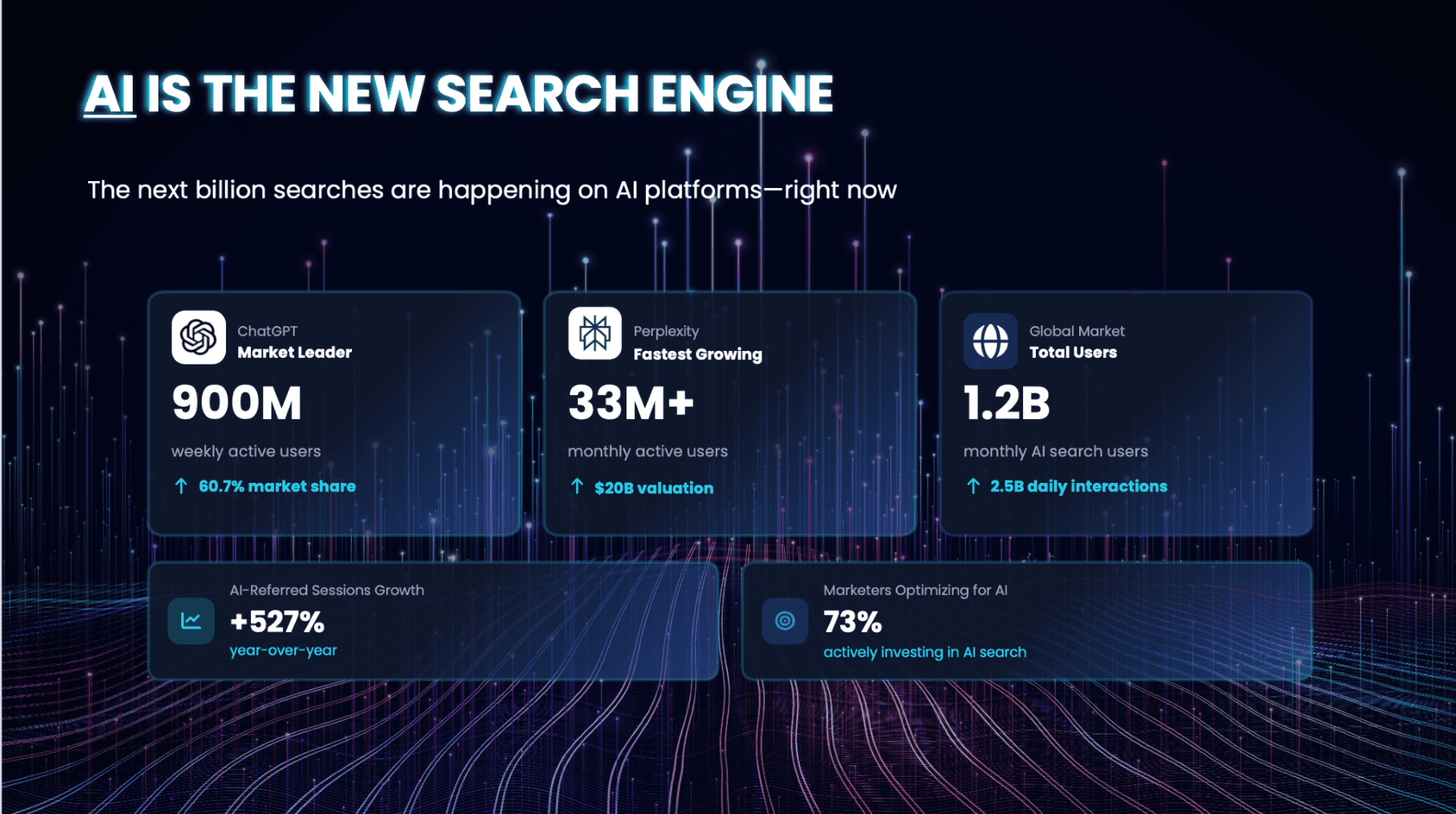

Most AI crawler traffic is not what it looks like. According to a Q1 2026 analysis of Cloudflare Radar data, approximately 89% of all AI bot activity serves model training or mixed purposes, with only around 8% classified as search-related retrieval. The bot visiting your site is overwhelmingly likely to be building training data, not sourcing citations, and it will not automatically send anything back. ClaudeBot currently crawls over 20,000 pages for every single referral it returns, a ratio that makes the disconnect between crawl volume and citation output concrete.

If you have been watching AI bot traffic in your server logs and treating it as a signal of AI visibility, you are optimising for the wrong thing. Crawling and citation are separate events, governed by entirely different criteria. Conflating them is where most GEO strategies break down before they have even started.

This guide provides a practical diagnostic framework for identifying which of three failure points is causing the gap between what AI bots consume on your site and what AI platforms actually surface about your brand. It covers what to check, what the signals mean, and how to connect your crawler data to your citation performance in a way that is repeatable over time.

The gap between crawling and being considered

Why being crawled does not mean being considered

When an AI crawler visits a page on your site, it is collecting raw data. That data feeds into a process, or series of processes, that is entirely separate from the decision to cite your brand in a response. The crawler is not evaluating whether your content deserves to appear. It is ingesting text that may, at some point downstream, inform a model update, a retrieval index, or a response generation pipeline. Those are different systems, operating on different timescales, with different criteria for what they surface.

Most SEO experts treat high crawler volume as a positive signal, which it is, but only in the sense that it confirms access. A site that is never crawled has no chance of citation. A site that is crawled frequently but never cited has a different problem entirely, and that problem requires a different diagnosis.

The Challenge

Most brands with active crawler traffic assume visibility will follow automatically. The volume of bot visits can feel like a proxy for progress, but it rarely is. Citation depends on criteria that sit entirely outside the crawl layer, and without a structured diagnostic, you cannot tell which layer is failing.

The Approach

The framework below diagnoses which of three distinct failure points is causing the gap. Working through each gate in sequence prevents the common mistake of optimising content structure when the underlying problem is a technical access block or an entity recognition gap.

The three gates

Citation gaps are rarely caused by a single factor. In practice, there are three distinct points at which a brand can drop out of the AI citation pipeline. Each has different symptoms, different diagnostic approaches, and different fixes. The order matters: if Gate 1 is blocked, working on Gate 3 will produce nothing.

Gate 1: Technical access

The first gate is the most straightforward to diagnose. It covers the infrastructure-level decisions that determine whether AI crawlers can reach your content at all. A brand can have strong entity recognition and well-structured content, but if robots.txt is inadvertently disallowing major AI user-agents, or if key pages rely on client-side JavaScript that bots cannot execute, none of that matters.

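JavaScript rendering is a significant and widely underestimated issue. A site built on React or Angular that renders its content client-side can rank at the top of Google while being entirely invisible to the systems that power ChatGPT and Claude. Googlebot has rendered JavaScript with an evergreen Chromium engine since 2019. Most major AI crawlers still do not execute JavaScript, so they see only the initial HTML response.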

CDN and WAF configuration is a separate failure point. Cloudflare Bot Management and similar security tools can flag AI crawlers as malicious bots and return 403 responses that your robots.txt cannot override. These blocks are often invisible unless you are actively reading your log files, because they produce no signal in GA4 or standard analytics.

Quick diagnostic

- Check robots.txt for rules that block GPTBot, ClaudeBot, PerplexityBot, OAI-SearchBot, and Google-Extended. Broad Disallow: patterns aimed at generic bots often catch AI agents unintentionally.

- Disable JavaScript in your browser and load your key pages. If your content disappears, GPTBot and ClaudeBot cannot see it either. Configure server-side rendering or dynamic rendering for pages you want AI to cite.

- Review server logs for 403 or 429 responses to known AI user-agent strings. These indicate CDN or WAF-level blocking that robots.txt will not fix.

- Check server response times for your most-crawled URLs. Slow responses reduce indexing quality, as crawlers operating under budget constraints deprioritise slow hosts, which reduces visit frequency and the recency of data they hold about your site.
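The robots.txt check in the list above can be scripted. The following is a minimal sketch using Python's standard-library `urllib.robotparser`; the sample robots.txt and the example.com URLs are hypothetical, and the agent list simply mirrors the tokens named in the checklist. It only tests robots.txt rules, so it will not reveal CDN or WAF blocks.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the checklist above.
AI_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "OAI-SearchBot", "Google-Extended"]


def blocked_agents(robots_txt: str, url: str, agents=AI_AGENTS) -> list[str]:
    """Return the agents that the given robots.txt text disallows for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt: a broad rule aimed at generic bots,
# plus an explicit allowance for a single AI crawler.
SAMPLE = """\
User-agent: *
Disallow: /guides/

User-agent: GPTBot
Allow: /
"""

if __name__ == "__main__":
    # The broad "*" rule catches every AI agent without its own group.
    print(blocked_agents(SAMPLE, "https://example.com/guides/ai-crawlers"))
```

In this sample, the generic Disallow rule blocks every AI agent except the one with its own group, which is the unintentional-catch pattern the checklist warns about.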

Gate 2: Entity recognition

Before an AI system will cite your brand confidently, it needs a coherent picture of what your brand is. This goes beyond whether your site has been crawled. It is about whether the model has enough consistent, corroborating information across multiple sources to build a reliable entity understanding.

Entity confusion tends to manifest in predictable ways: a brand appears in responses with incorrect attributes, gets conflated with a similarly named competitor, or simply does not appear in categories where it should be an obvious candidate. The cause is usually an incomplete or inconsistent entity footprint. Your website may describe your brand one way, your Google Business Profile another, third-party review platforms another. Those inconsistencies compound silently across training cycles.

Quick diagnostic

- Run your brand name as a prompt across ChatGPT, Perplexity, and Google AI Overview. Ask each one to describe what your brand does, who it serves, and what it is known for. Note discrepancies between platforms and between AI responses and your own positioning.

- Check that Organization schema (the schema.org type uses the US spelling) is implemented on your homepage and key landing pages, with consistent name, url, logo, and sameAs values pointing to your verified profiles.

- Audit consistency across Wikipedia (if applicable), LinkedIn, Wikidata, Crunchbase, and major industry directories. The name, description, founding date, and category should match.

- Look for off-site coverage that references your brand in the context you want to own. AI systems weight third-party corroboration heavily when building entity understanding.
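The schema and consistency checks above can be partly automated. The sketch below is illustrative only: the brand data, profile sources, and field names (`founding_date`, `category`) are hypothetical, and the profile values would in practice be collected by hand or exported from each platform. It builds a schema.org Organization object for embedding as JSON-LD and flags fields that differ between your reference description and each third-party profile.

```python
import json

# Fields the audit step above says should match across sources.
AUDIT_FIELDS = ("name", "description", "founding_date", "category")


def organization_jsonld(name, url, logo, same_as):
    """Build a schema.org Organization object ready to embed as JSON-LD."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "sameAs": list(same_as),
    }


def _normalise(value) -> str:
    # Ignore case and whitespace differences so only real discrepancies surface.
    return " ".join(str(value).split()).casefold()


def find_mismatches(reference, profiles, fields=AUDIT_FIELDS):
    """Return (source, field, found_value) for every field that disagrees."""
    mismatches = []
    for source, profile in profiles.items():
        for field in fields:
            actual = profile.get(field)
            if actual is None:
                mismatches.append((source, field, "missing"))
            elif _normalise(actual) != _normalise(reference[field]):
                mismatches.append((source, field, actual))
    return mismatches


# Hypothetical brand data for illustration only.
REFERENCE = {
    "name": "Example Co",
    "description": "Multilingual SEO agency",
    "founding_date": "2015",
    "category": "Marketing agency",
}
PROFILES = {
    "linkedin": dict(REFERENCE),
    "crunchbase": {
        "name": "Example Company Ltd",
        "description": "multilingual  SEO agency",
        "founding_date": "2016",
        "category": "Marketing agency",
    },
}

if __name__ == "__main__":
    markup = organization_jsonld(
        "Example Co",
        "https://example.com",
        "https://example.com/logo.png",
        ["https://www.linkedin.com/company/example"],
    )
    print(json.dumps(markup, indent=2))
    for source, field, value in find_mismatches(REFERENCE, PROFILES):
        print(f"{source}: {field} differs ({value})")
```

Normalising case and whitespace before comparing is a design choice to keep the report focused on substantive differences, such as a different legal name or founding year.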

Gate 3: Content structure for extraction

RAG (Retrieval-Augmented Generation) systems do not retrieve pages. They retrieve chunks. A typical chunk is somewhere between 200 and 500 tokens, and the retrieval decision is made at the chunk level, not the page level. This means that even if your content reaches the retrieval pipeline, whether it gets surfaced depends on whether individual passages are self-contained enough to be extracted and cited without surrounding context.

Most GEO optimisation work happens here, which is not wrong, but it is often applied without checking Gates 1 and 2 first. Fixing content structure for a brand that AI cannot confidently identify, or whose key pages are blocked to crawlers, produces marginal results at best.

Quick diagnostic

- Check that key passages open with a declarative statement that can stand alone. A paragraph beginning ‘As mentioned above’ or ‘Building on this’ cannot be extracted cleanly.

- Review your FAQ and structured Q&A content. These formats map directly to how RAG systems retrieve and present information.

- Audit heading hierarchy for clarity and specificity. Headings function as retrieval labels. A heading that reads ‘Our approach’ tells a retrieval system very little. ‘How we handle hreflang for multilingual e-commerce sites’ is extractable.

- Check for FAQ schema (FAQPage), HowTo schema, and Speakable schema (the speakable property) on pages where you want AI citation. These are not guarantees, but they reduce retrieval ambiguity.

- Look for data specificity. Vague claims (‘we help businesses grow’) are rarely cited. Specific, verifiable statements (‘reduces crawl waste by 40% on average’) are far more likely to appear in AI responses.
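The first check in this list, whether passages open in a way that can stand alone, lends itself to a quick script. This is a rough sketch, not a model of how any retrieval system actually scores chunks: the list of context-dependent openers is an assumption you would extend for your own content, and paragraphs are assumed to be separated by blank lines.

```python
import re

# Assumed list of openers that lean on surrounding context; extend as needed.
DEPENDENT_OPENERS = (
    "as mentioned above",
    "building on this",
    "as noted earlier",
    "as discussed",
)


def flag_dependent_openers(text: str) -> list[tuple[int, str]]:
    """Return (paragraph_index, opener) for paragraphs that cannot stand alone."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    flagged = []
    for index, paragraph in enumerate(paragraphs):
        start = paragraph.casefold()
        for opener in DEPENDENT_OPENERS:
            if start.startswith(opener):
                flagged.append((index, opener))
                break
    return flagged


# Hypothetical page copy for illustration.
SAMPLE_COPY = """\
Hreflang tags tell search engines which language version of a page to serve.

As mentioned above, these tags must be reciprocal.

Building on this, each locale needs a self-referencing entry.
"""

if __name__ == "__main__":
    for index, opener in flag_dependent_openers(SAMPLE_COPY):
        print(f"Paragraph {index} opens with '{opener}'")
```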

From diagnosis to ongoing monitoring

Building your baseline

Before you fix anything, you need to know where you stand. A baseline takes under an hour to establish and gives you a reference point against which future changes can be measured.

Start by running a set of brand and category queries across ChatGPT, Perplexity, and Google AI Overview. Include direct brand queries, comparative queries against named competitors, and category queries where you expect to appear but are not named. Record the responses: whether you are cited, how you are described, which URLs are referenced, and whether competitors appear instead.

Cross-reference this against your server logs. Pull the AI user-agent strings from the past 30 days and map which URLs each crawler visited, what response codes were returned, and what the response times were. Overlay this against the URLs that appeared, or did not appear, in your AI query responses. That gap is your diagnostic starting point.
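The overlay described above reduces to a set comparison once both lists exist. A minimal sketch, assuming you have already extracted the URL paths AI crawlers requested from your logs and recorded by hand the URL paths referenced in AI responses; all paths shown are hypothetical.

```python
def crawl_citation_gap(crawled_urls, cited_urls) -> dict[str, list[str]]:
    """Split URLs into crawled-only, cited-only, and both."""
    crawled = set(crawled_urls)
    cited = set(cited_urls)
    return {
        "crawled_not_cited": sorted(crawled - cited),
        "cited_not_crawled": sorted(cited - crawled),
        "crawled_and_cited": sorted(crawled & cited),
    }


# Hypothetical inputs: paths seen in 30 days of logs, and paths cited in responses.
CRAWLED = ["/guides/hreflang", "/pricing", "/blog/ai-crawlers"]
CITED = ["/guides/hreflang", "/case-studies/retail"]

if __name__ == "__main__":
    for bucket, urls in crawl_citation_gap(CRAWLED, CITED).items():
        print(bucket, urls)
```

The crawled-but-not-cited bucket is the one to carry into the Gate 2 and Gate 3 checks; a cited-but-not-crawled URL usually means the log window is too short or the crawler is being logged elsewhere.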

What your log file is actually telling you

GA4 is blind to AI crawler visits. Because AI fetchers do not trigger client-side JavaScript tracking, their visits produce no sessions, no events, and no attribution in standard analytics. You need raw server log analysis, querying directly for AI user-agent strings and reading the HTTP status codes each receives.
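As one way to query logs for AI user-agent strings, the sketch below counts HTTP status codes per bot. It assumes the common "combined" access log format used by default in Apache and widely configured in Nginx; the sample lines, IP addresses, and simplified user-agent strings are illustrative, and real crawler user-agent strings are longer. Response time is not part of that format, so measuring it would require a custom log field.

```python
import re
from collections import Counter

# Substrings to look for in the User-Agent header.
AI_BOT_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot", "OAI-SearchBot")

# Combined log format: ip ident user [time] "request" status bytes "referer" "agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def status_counts_by_bot(lines) -> Counter:
    """Count (bot, status) pairs for requests made by known AI crawlers."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines that are not in the expected format
        agent = match["agent"].lower()
        bot = next((t for t in AI_BOT_TOKENS if t.lower() in agent), None)
        if bot is not None:
            counts[(bot, int(match["status"]))] += 1
    return counts


# Illustrative log lines with simplified user-agent strings.
SAMPLE_LOG = [
    '203.0.113.5 - - [10/Mar/2026:10:00:00 +0000] "GET /guides/ HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [10/Mar/2026:10:00:05 +0000] "GET /pricing HTTP/1.1" 403 310 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.7 - - [10/Mar/2026:10:00:09 +0000] "GET / HTTP/1.1" 200 900 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
]

if __name__ == "__main__":
    for (bot, status), count in sorted(status_counts_by_bot(SAMPLE_LOG).items()):
        print(bot, status, count)
```

Matching on the user-agent string alone can be spoofed, so treat the counts as a first pass and verify unexpected traffic against each operator's published IP ranges where available.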

Used correctly, server logs function as a leading indicator of citation potential. The signals that matter are which bots are visiting, which URLs they return to repeatedly, how quickly your server responds, and whether status codes suggest access problems. A URL receiving regular visits from multiple AI crawlers with fast 200 responses is actively being treated as a data source. A URL visited once and never revisited, or one returning slow responses, is being deprioritised.

Frequency trends are particularly telling. An increase in visit frequency from a specific crawler often precedes an improvement in citation from the platform it serves. A drop in frequency, especially alongside an increase in 4xx or 5xx responses, suggests something has changed in how that crawler sees your site.
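A frequency trend can be tracked with a simple weekly tally. This sketch assumes you have already reduced your logs to (bot name, visit date) pairs; the visit data is hypothetical, and grouping by ISO week is one reasonable choice among several.

```python
from collections import Counter
from datetime import date


def weekly_visits(visits) -> Counter:
    """Count visits per (bot, ISO year, ISO week)."""
    counts = Counter()
    for bot, day in visits:
        iso_year, iso_week, _ = day.isocalendar()
        counts[(bot, iso_year, iso_week)] += 1
    return counts


def relative_change(previous: int, current: int):
    """Fractional change between two periods; None when there is no baseline."""
    if previous == 0:
        return None
    return (current - previous) / previous


# Hypothetical visits: four in one week, two in the next.
VISITS = [
    ("GPTBot", date(2026, 3, 2)),
    ("GPTBot", date(2026, 3, 3)),
    ("GPTBot", date(2026, 3, 5)),
    ("GPTBot", date(2026, 3, 6)),
    ("GPTBot", date(2026, 3, 10)),
    ("GPTBot", date(2026, 3, 12)),
]

if __name__ == "__main__":
    counts = weekly_visits(VISITS)
    weeks = sorted(counts)
    for earlier, later in zip(weeks, weeks[1:]):
        change = relative_change(counts[earlier], counts[later])
        print(earlier, "->", later, change)
```

A sustained negative change for one crawler, read alongside its 4xx and 5xx counts for the same weeks, is the pattern the paragraph above describes.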

BuzzWatch LLM Traffic Analytics: per-visit data across 30+ AI crawlers, tracking bot name, HTTP status, response time, and bot score. Built by GA Agency.

Where ongoing monitoring becomes essential

A one-off diagnostic gives you a starting point. It does not give you a system. Citation patterns shift significantly from month to month as models update, as content enters and exits retrieval indexes, and as competitors adjust their strategies. A brand that is well-cited in ChatGPT responses today may see that change within weeks, for reasons that are not visible without monitoring both the crawler layer and the response layer simultaneously.

This is where the two data sources need to connect. Crawler data tells you what AI bots are consuming from your site. Response monitoring tells you what AI platforms are surfacing about your brand. On their own, each tells an incomplete story. Together, they let you identify patterns: which URLs correlate with which types of responses, whether a drop in citation follows a drop in crawl frequency, and which content investments are actually moving the needle.

BuzzWatch Monitoring and Analytics: AI Visibility Score, mention frequency trends, and competitor comparison across ChatGPT, Perplexity, and Google AI Overview.

GA Agency built BuzzWatch to connect these two layers in one place. The tool’s LLM Traffic Analytics module tracks which AI crawlers are visiting your site, which URLs they are reading, how fast you are responding, and how visit patterns change over time. This sits alongside response monitoring across ChatGPT, Perplexity, and Google AI Overview, so the relationship between what bots consume on your site and what AI surfaces about your brand becomes visible and actionable rather than something you have to infer from two separate sources.

BuzzWatch Gap Analysis and AI Visibility Scoring: where competitors appear but you are invisible, with a content planning workflow from gap identification to published and evaluated.

The core argument

The citation gap is a systems problem. It spans technical access, entity recognition, and content structure, and it compounds silently because each layer can appear to be functioning fine while quietly failing downstream. Most GEO work addresses only the third gate, which is a large part of why most GEO work produces limited results.

The diagnostic framework in this guide is not a one-time exercise. Run it against your baseline. Fix the gate that is blocking you. Then build monitoring into your regular workflow so that when citation patterns shift, as they will, you are not discovering the problem weeks after it started. The data to catch it early is already in your logs.

Want to connect your crawler data to your AI citation performance in one place? BuzzWatch is a tool built by GA Agency that tracks AI crawler activity on your site alongside brand visibility across ChatGPT, Perplexity, and Google AI Overview, making the link between the two visible and actionable. Find out more and sign up at https://buzzwatch.ai/.