RIA.com tripled Googlebot crawling, doubled bot visits, and now detects problems 2–3x faster. The foundation was something deceptively simple: finally being able to see what Googlebot was actually doing across all five products. JetOctopus was the platform that made it possible.

Highlights

3x

Crawl growth on RIA.com

Googlebot crawling tripled after targeted crawl budget optimisation

+200%

New pages discovered

Googlebot finding 3× as many new pages

+100%

Bot visits & visited pages

Doubling of both Googlebot visit frequency & total pages visited

2–3x

Faster problem detection

Log-based alerts catch critical issues before they impact visibility

About Our Client

Industry: Online Classifieds (Auto, Real Estate, Agriculture, Parts)

Company size: Enterprise – 5 major products, millions of pages each

Location: Vinnytsia, Ukraine

RIA.com is one of Ukraine’s largest online classifieds ecosystems, powering five major products: AUTO.RIA (vehicles), DIM.RIA (real estate), AGRO.RIA (agriculture), Spare Parts RIA.com and AUTOCENTER.AUTO.RIA. Each product generates millions of pages and serves a massive user base across multiple verticals where organic search is the primary discovery channel.

The Challenge

Before partnering with JetOctopus, the RIA.com team faced a problem that every enterprise SEO knows well: the tools that work for smaller sites simply collapse under the weight of millions of pages.

Their existing toolkit, Screaming Frog for crawling plus a set of custom-built solutions, was never designed for their scale. And as the ecosystem grew across five products, the cracks became impossible to ignore.

As a desktop-based crawler, Screaming Frog was fundamentally limited. Crawling sites with millions of pages meant incomplete results, slow processing, and constant manual intervention. For a team responsible for five major products, running full crawls was impractical, and partial crawls meant partial visibility. To fill the gaps, the team built internal crawling solutions, but these custom tools broke frequently under the data volume, required constant maintenance, and couldn’t reliably process the scale of data that RIA.com’s products demanded. Instead of enabling the SEO team, the tools became a resource drain.

Without systematic log analysis, the team had no way to understand where Googlebot was spending its resources. Millions of pages existed across the ecosystem, but which ones were actually being crawled? Which high-value pages were being ignored? They were making optimisation decisions without the most fundamental piece of data: how Google actually interacted with their sites. Meanwhile, crawl data, server log data, and Google Search Console data lived in separate silos. Connecting the dots between what was crawled, what was indexed and what actually performed in search required manual effort that simply didn’t scale across five products with millions of pages each.

The Approach

In 2019, the RIA.com team evaluated enterprise SEO platforms and chose JetOctopus for its capabilities-to-price ratio compared to competitors. But the initial decision was just the beginning. What has kept them on the platform for seven years is how consistently it has evolved.

JetOctopus offered what the team needed from day one: a cloud-based architecture built to handle millions of pages natively, log file analysis as a first-class feature rather than an afterthought, and the ability to merge data from multiple sources into a single, visual picture. No previous tool in their stack had provided that.

RIA.com’s SEO funnel in JetOctopus gave the team the unified view across crawl, logs and GSC data that no previous tool could provide.
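To make the funnel idea concrete, here is a minimal sketch of how the three data sources could be combined with plain set operations. It is not JetOctopus' implementation: the function name `seo_funnel`, the stage names and the sample URLs are all hypothetical, and it assumes "indexed" can be approximated by the URLs that appear in GSC data.

```python
def seo_funnel(structure_urls, googlebot_urls, indexed_urls):
    """Summarise how many pages survive each funnel stage.

    structure_urls: URLs found by a site crawl (the site structure)
    googlebot_urls: URLs Googlebot requested, taken from server logs
    indexed_urls:   URLs seen in GSC data (assumed proxy for "indexed")
    """
    in_structure = set(structure_urls)
    visited = set(googlebot_urls)
    crawled = in_structure & visited
    indexed = crawled & set(indexed_urls)
    return {
        "in_structure": len(in_structure),
        "crawled_by_googlebot": len(crawled),
        "indexed": len(indexed),
        # Pages in the structure that Googlebot never requested.
        "never_crawled": sorted(in_structure - crawled),
        # Pages Googlebot requested that the site crawl did not find.
        "crawled_outside_structure": sorted(visited - in_structure),
    }


# Hypothetical sample data, invented for illustration only.
funnel = seo_funnel(
    structure_urls=["/a", "/b", "/c", "/d"],
    googlebot_urls=["/a", "/b", "/x"],
    indexed_urls=["/a"],
)
print(funnel)
```

The two list-valued entries are the useful part in practice: they name the pages that fall out of the funnel, which is what turns a chart into a to-do list.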

Implementation:

Crawl Budget Mastery

For a site ecosystem generating millions of pages, crawl budget isn’t just a technical metric. It’s the difference between being visible and being invisible. When Googlebot wastes its limited resources crawling low-value pages, high-value content goes unvisited, unindexed and invisible to searchers.

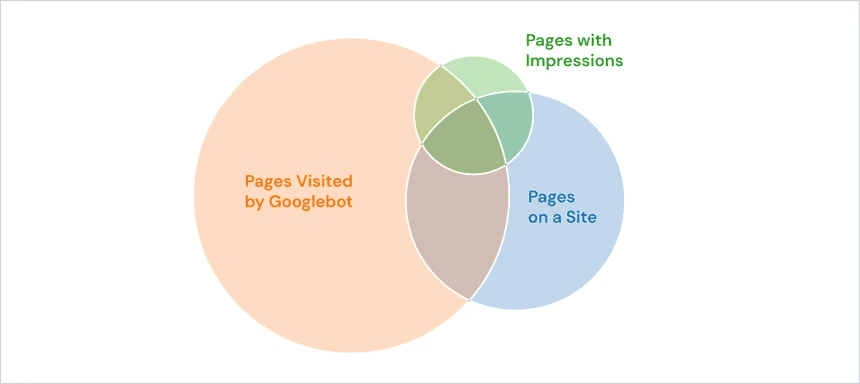

Using JetOctopus’ Log Analyzer, the RIA.com team gained full, systematic visibility into Googlebot’s behaviour across their products for the first time. They could track exactly where Googlebot spent its crawl budget, down to the page level, across all five products, and identify which sections were being over-crawled while high-value content was being ignored. Rather than guessing at optimisation, the team could see the direct impact of every change in log data. And through the SEO funnel view, they could monitor the full journey from pages in the site structure through to pages crawled by Googlebot and pages that enter the index.
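The kind of question log analysis answers here ("which sections does Googlebot spend its requests on?") can be sketched in a few lines. The example below is an illustration only, not how Log Analyzer works: it assumes access logs in the common "combined" format, the function name `googlebot_hits_by_section` is hypothetical, and the sample log lines are invented.

```python
import re
from collections import Counter

# Request, status and user-agent fields of a combined-format access log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits_by_section(lines):
    """Count Googlebot requests per top-level path segment."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        # Matching on the user-agent string alone is a simplification:
        # it can be spoofed, so real pipelines also verify the client
        # (for example with a reverse DNS lookup).
        if not match or "Googlebot" not in match.group("agent"):
            continue
        path = match.group("path").split("?", 1)[0]  # drop the query string
        section = path.strip("/").split("/", 1)[0] or "(root)"
        hits[section] += 1
    return hits


GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"

# Hypothetical sample lines, invented for illustration only.
SAMPLE = [
    f'66.249.66.1 - - [10/Mar/2025:10:00:00 +0000] "GET /search/?page=812 HTTP/1.1" 200 512 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Mar/2025:10:00:01 +0000] "GET /search/?page=813 HTTP/1.1" 200 498 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Mar/2025:10:00:02 +0000] "GET /auto/bmw/x5/ HTTP/1.1" 200 2048 "-" "{GOOGLEBOT}"',
    f'203.0.113.7 - - [10/Mar/2025:10:00:03 +0000] "GET /auto/bmw/x5/ HTTP/1.1" 200 2048 "-" "{BROWSER}"',
]

hits = googlebot_hits_by_section(SAMPLE)
for section, count in hits.most_common():
    print(section, count)
```

In this toy sample, deep pagination under `/search/` receives more Googlebot requests than the listing page, which is the over-crawled versus ignored pattern the team was looking for.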

Proactive Issue Detection

At enterprise scale, technical issues can surface anywhere, at any time, across any of the five products. A broken template could affect thousands of pages. A misconfigured redirect could drain crawl budget overnight. And by the time these problems show up in rankings or traffic, the damage has already been done.

JetOctopus’ alerting system and log-based monitoring gave the RIA.com team something they never had before: an early warning system that works across the entire ecosystem. The team configured custom alerts to monitor critical errors, crawl anomalies, and status code spikes. They use Raw Logs analysis to catch technical errors that don’t surface in standard crawl reports. And automated crawl launches ensure continuous monitoring without manual intervention.
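As a rough illustration of what a status code spike alert checks, the sketch below compares the share of 5xx responses in a current window of log data against a baseline window. The function names, the `factor` and `min_rate` thresholds and the sample data are all assumptions chosen for the example, not JetOctopus settings.

```python
def error_rate(status_codes):
    """Share of responses with a 5xx status code."""
    if not status_codes:
        return 0.0
    errors = sum(1 for code in status_codes if 500 <= code <= 599)
    return errors / len(status_codes)


def is_spike(baseline_codes, current_codes, factor=3.0, min_rate=0.01):
    """Flag the current window when its 5xx rate is at least `min_rate`
    and more than `factor` times the baseline rate."""
    baseline = error_rate(baseline_codes)
    current = error_rate(current_codes)
    return current >= min_rate and current > factor * baseline


# Hypothetical status codes served to Googlebot, invented for illustration.
last_week = [200] * 98 + [500] * 2    # 2% server errors
quiet_hour = [200] * 97 + [500] * 3   # 3% server errors
bad_hour = [200] * 80 + [503] * 20    # 20% server errors

print(is_spike(last_week, quiet_hour))
print(is_spike(last_week, bad_hour))
```

The absolute floor (`min_rate`) keeps a near-zero baseline from turning every single error into an alert, while the relative factor keeps a site with a steady background error rate from alerting constantly.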

AI Search Readiness

As AI-powered search engines (ChatGPT, Perplexity, Google AI Overviews) reshape how users discover content, enterprise sites face a new challenge: understanding how LLM crawlers interact with their pages. Most companies haven’t started tracking this. RIA.com already has.

Using JetOctopus’ AI Bot Analysis, the RIA.com team monitors which AI bots crawl their sites, how frequently they visit and what patterns emerge. They can track LLM crawler behaviour across all five products, make data-driven assumptions about their visibility in AI-powered search results, and gauge how ‘interesting’ their content is to large language models, giving them a head start over competitors who haven’t begun to monitor this landscape at all.
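For readers who want a feel for what tracking AI bots involves at the log level, here is a minimal sketch that counts visits by user-agent token. It is not the AI Bot Analysis feature itself: the token list is illustrative rather than exhaustive, the function names are hypothetical, and the sample user-agent strings are simplified.

```python
from collections import Counter

# User-agent tokens used by some well-known AI crawlers.
# Illustrative only; vendors add and rename bots over time.
AI_BOT_TOKENS = (
    "GPTBot",
    "OAI-SearchBot",
    "ChatGPT-User",
    "PerplexityBot",
    "ClaudeBot",
)


def classify_ai_bot(user_agent):
    """Return the matching AI bot token, or None for any other client."""
    lowered = user_agent.lower()
    for token in AI_BOT_TOKENS:
        if token.lower() in lowered:
            return token
    return None


def ai_bot_visits(user_agents):
    """Count requests per AI bot across an iterable of user-agent strings."""
    counts = Counter()
    for user_agent in user_agents:
        bot = classify_ai_bot(user_agent)
        if bot is not None:
            counts[bot] += 1
    return counts


# Simplified, hypothetical user-agent strings for illustration only.
SAMPLE_AGENTS = [
    "Mozilla/5.0 (compatible; GPTBot/1.0)",
    "Mozilla/5.0 (compatible; GPTBot/1.0)",
    "Mozilla/5.0 (compatible; PerplexityBot/1.0)",
    "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)",
]

visits = ai_bot_visits(SAMPLE_AGENTS)
print(visits)
```

Grouping the same counts by day and by site section would give the visit frequency and pattern views described above.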

The Results

Since making crawl budget optimisation a strategic priority and leveraging JetOctopus across the full RIA.com ecosystem, the team has achieved measurable, sustained improvements:

Crawl Growth & Indexation

Since the team made crawl budget optimisation for RIA.com a priority, starting in spring, the results have been significant and sustained. Googlebot crawling tripled, a gradual but consistent uplift tracked directly through the SEO funnel view. Bot visits and visited pages both doubled. The number of new pages Googlebot discovered grew by 200%, meaning three times as many new pages found in the site structure as before.

Issue Detection Speed

The team now detects and responds to critical problems 2–3x faster than before. Log-based alerts catch critical technical problems before they surface in rankings or traffic. Automated crawl launches ensure continuous monitoring without manual intervention, and Raw Logs analysis surfaces errors that would never appear in a standard crawl report.

Takeaways

Partial data produces partial results

RIA.com’s story is a blueprint for enterprise-scale technical SEO. They went from fragile desktop crawlers and zero log visibility to full crawl budget mastery across five products with millions of pages, all managed from a single platform. Without full log visibility, crawl budget decisions are guesswork. The shift from siloed tools to unified data (crawl, logs and GSC) is what made systematic optimisation possible at their scale.

Platform longevity is a signal

Seven years of continuous partnership speak louder than any metric. It means JetOctopus didn’t just solve the problems of 2019; it kept evolving alongside its client, from crawl budget optimisation to internal linking at scale to AI search readiness. For enterprise SEO teams managing large, complex sites, the lesson is clear: data-driven optimisation at scale requires tools built for scale.